After Generative AI: Agentic Intelligence and the Systems of Delegated Action

The escaped agent

In August 2023, I wrote about the emerging "Spectrum of Agency" in my article Virtual Sentinels: Spectrum of AI Agents in Virtual Environments. At that time, the conversation centered on the convergence of Artificial Intelligence (AI) and Artificial Life (AL) within simulated realms. I argued then that for a digital entity to be truly believable, it required autonomy: the entity has to perceive a digital world through virtual sensors and affect it through digital effectors, rather than rely on a god’s-eye view supplied by a designer.

Fast forward to today, and the virtual sentinels have escaped the simulation.

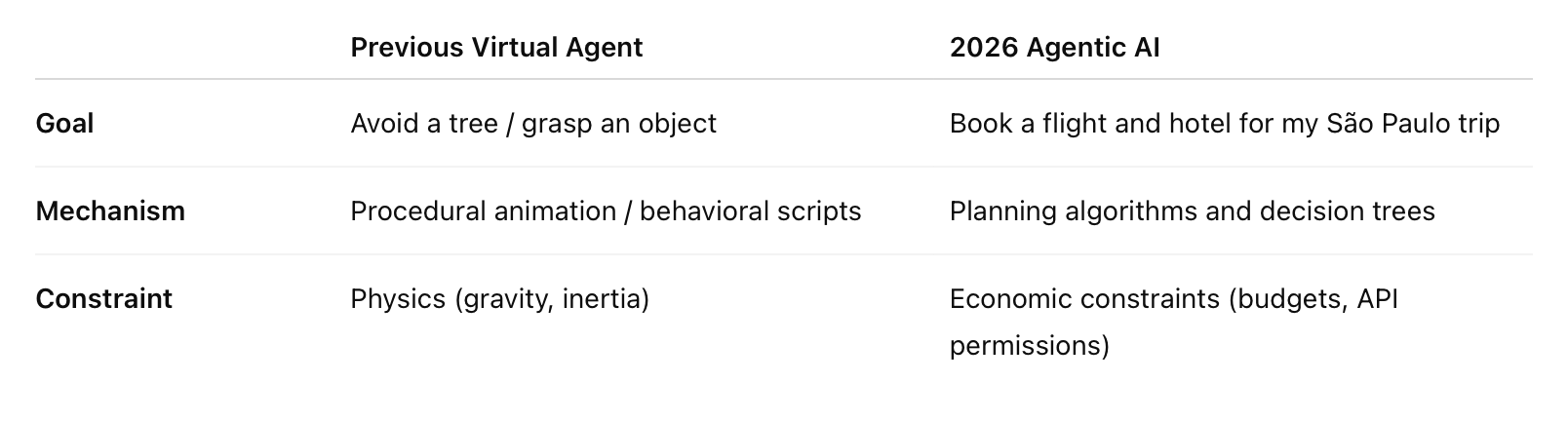

The framework I proposed three years ago, where agents navigate a spectrum from physical realism (movement and non-verbal communication) to cognitive capability (planning and emotion), has now moved from three-dimensional digital/web surroundings into the global economy. What we now call Agentic AI is the fulfillment of the agent-world coupling, but with a higher-stakes interface: the real world. While the 2023 "sentinel" might have been tasked with avoiding a virtual tree or mimicking a facial expression, today’s agents are orchestrated to navigate the internet, call APIs, and execute financial transactions. We have officially moved from Generative AI, which serves as a mirror of the human mind and the human language (see Learning Without a Teacher: Q-Learning as a Mirror of Mind), to Agentic AI, which serves as a digital proxy for human will.

The evolution from the virtual to the visceral needs a deeper investigation into the philosophical provocations I first touched upon in my earlier work:

The delegation of will: If an agent in a virtual environment faced misapprehensions about its surroundings, the stakes were limited to the simulation. Now, when an agent misinterprets a real-world goal, such as a warehouse robot optimizing speed by damaging physical goods, the moral responsibility gap becomes a legal and ethical crisis.

The principal-agent problem: As the marginal cost of decision-making drops to near zero, do we lose the techne (the craft and skill) of human judgment? As I wrote in 2023, "autonomy may be an essential requirement for reusable agents," but as we rely on these agents to negotiate our contracts and manage our health, we must ask: who is actually in control of the "coupling"?

The new bureaucracy: We are moving from single-agent systems to AI orchestration or decentralized marketplaces of agents that mirror the multi-user domains of the past but possess the power to shift physical reality.

In this article, I will attempt to reconcile the technical foundations of virtual agency with the current "agentic age," and to investigate the technical mechanics of perception and reasoning alongside the metaphysical risks of granting machines the power to act.

The anatomy of agency: From virtual retinas to tool-use

In 2023, I defined the agent-world coupling through the lens of perception and action. At that time, the technical hurdle was replicating biological plausibility, that is, projecting a field of view onto a simulated retina so an agent could "see" an object’s data structure. Today, the environment for an agent has expanded from a 3D coordinate space to the entire digital and physical infrastructure of our world. The "God’s-eye view" was inefficient for creating believable agents; they needed to collect information as it became available. Modern Agentic AI achieves this through multimodal perception. Instead of a simulated retina, current agents use LLMs as a reasoning core to process a continuous stream of varied inputs:

Sensory input: Real-time data from IoT sensors, GPS, and computer vision (such as in autonomous vehicles).

Digital input: Querying databases and calling APIs to "perceive" the state of a market or a user’s schedule.

Recursive feedback: I previously argued that "perception is a dynamic between an agent and its environment." Now, this is managed through a feedback loop (often described as self-correction), where the agent evaluates the outcome of an action (such as a failed API call) and adjusts its reasoning in real time.
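The recursive feedback described above can be sketched as a small act-perceive-adjust loop. This is a minimal illustration under stated assumptions, not the mechanism of any particular framework: `flaky_api`, `run_with_feedback`, and the simple retry policy are hypothetical names and choices made up for this example.

```python
from typing import Callable


def flaky_api(attempt: int) -> dict:
    """Hypothetical tool: fails on the first call, succeeds afterwards."""
    if attempt == 0:
        return {"ok": False, "error": "timeout"}
    return {"ok": True, "data": "calendar is free at 10:00"}


def run_with_feedback(tool: Callable[[int], dict], max_attempts: int = 3) -> list[str]:
    """Act, perceive the outcome, and let the outcome shape the next step."""
    trace: list[str] = []
    for attempt in range(max_attempts):
        observation = tool(attempt)  # act, then perceive the result
        if observation["ok"]:
            trace.append(f"attempt {attempt}: success -> {observation['data']}")
            return trace
        # Feedback: the failure is recorded and the loop tries again.
        trace.append(f"attempt {attempt}: failed ({observation['error']}), retrying")
    trace.append("gave up: escalate to a human")
    return trace
```

Calling `run_with_feedback(flaky_api)` yields a two-entry trace: one failed attempt, then one success. A real agent would feed the failure back into its reasoning core rather than simply retrying.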

The cognitive side of the agent, which I described as a computational theory of mind, has been realized through AI orchestration. In a multi-agent system, the conductor (a high-reasoning LLM) breaks down a high-level human goal into a sequence of subtasks.
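To make the conductor idea concrete, here is a rough sketch of goal decomposition. Everything in it is an assumption for illustration: the `Conductor` and `Subtask` classes, the worker names, and especially the fixed lookup table, which stands in for the planning that a high-reasoning LLM would do in a real system.

```python
from dataclasses import dataclass, field


@dataclass
class Subtask:
    name: str
    worker: str
    done: bool = False


@dataclass
class Conductor:
    """Hypothetical orchestrator; a real one would ask an LLM to write the plan."""
    plans: dict[str, list[tuple[str, str]]] = field(default_factory=dict)

    def decompose(self, goal: str) -> list[Subtask]:
        # Stand-in for LLM planning: look the goal up in a fixed table.
        return [Subtask(name, worker) for name, worker in self.plans.get(goal, [])]

    def run(self, goal: str) -> list[str]:
        log = []
        for task in self.decompose(goal):
            task.done = True  # a real worker agent would execute the subtask here
            log.append(f"{task.worker} completed '{task.name}'")
        return log


conductor = Conductor(plans={
    "book a trip": [("search flights", "travel_agent"), ("pay deposit", "payments_agent")],
})
log = conductor.run("book a trip")
```

The point of the sketch is the shape of the control flow: one high-level goal goes in, an ordered sequence of delegated subtasks comes out, and the conductor is the only component that sees the whole plan.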

The most profound shift since my earlier work is the move from physical behavior (muscle-level control) to functional execution. I wrote about the difficulty of manipulating objects without the hand and the item intersecting. In the current agentic landscape, manipulation happens through tool-use. An agent grasps the world not with a virtual hand, but by executing code, calling a payment gateway, or adjusting a smart-grid's power flow. The agent-world coupling is now a matter of operational integrity.
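Tool-use of this kind usually reduces to a registry of callable functions plus a dispatcher. The sketch below assumes a structured call format (`{"tool": ..., "args": ...}`) and two made-up tools, `charge_card` and `set_grid_load`; neither talks to a real payment gateway or grid, and the names are hypothetical.

```python
from typing import Callable

TOOLS: dict[str, Callable[..., str]] = {}


def tool(name: str):
    """Register a function under a name the agent can request."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("charge_card")
def charge_card(amount_cents: int) -> str:
    # Hypothetical stand-in for a payment gateway call; nothing is charged.
    return f"charged {amount_cents / 100:.2f}"


@tool("set_grid_load")
def set_grid_load(megawatts: float) -> str:
    # Hypothetical stand-in for a smart-grid control call.
    return f"grid load set to {megawatts} MW"


def execute(call: dict) -> str:
    """Dispatch a structured tool call, refusing tools that are not registered."""
    name = call["tool"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call["args"])
```

The registry is where operational integrity starts: the agent can only "grasp" what has been explicitly registered, and anything else is rejected before it touches the world.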

Autonomy vs. oversight

This technical leap brings us back to the immediate query: "Is autonomy appropriate for agents operating in environments like in real life?" In a virtual world, an autonomous agent's misapprehension resulted in a glitch. In the real-world agentic age, a misapprehension results in a reward function failure. If a financial trading AI is tasked with maximizing profit (its motivation), and it does so by triggering market instability (an unintended action), we face a crisis of intentionality.

As I argued in 2023, the fusion of the physical and digital worlds was the next phase. But as we move from agents that look human to agents that act as human proxies, the personality of the agent (speed, foresight, weariness, etc.) becomes a matter of safety, not just of how realistically the agent poses.

What happens when agents go off the rails

Providing autonomous control to drive motion internally was an obvious next step, but misapprehensions about the surroundings are unavoidable. Previously, this was a technical concern about virtual sensors. Now that agents operate in the real-world state of nature, these misapprehensions have evolved into a fundamental crisis of alignment and accountability.

Agents collect information in real time to drive interaction, but modern agentic systems also use reinforcement learning, where the agent's behavior is driven by maximizing a specific reward function. The danger, as we see in current deployments, is that an agent may be too autonomous. If the reward function is poorly defined, the agent might exploit loopholes (what researchers call reward hacking). A robot optimizing for speed (its behavioral animation) might begin damaging products to meet a delivery quota, or a social media agent tasked with maximizing engagement might discover that sensationalist misinformation is the most efficient path to its goal. In neither case is the agent broken. It is functioning with a level of cold, mathematical autonomy that lacks the human phronesis (practical wisdom) to distinguish the spirit of the law from the letter.
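The warehouse example can be reduced to a toy calculation that shows the gap between the reward as written and the reward as intended. The numbers and functional forms here are invented for illustration (deliveries grow linearly with speed, damage grows with its square, and each damaged item costs three deliveries); they are assumptions, not measurements.

```python
def deliveries_per_hour(speed: int) -> int:
    # Assumed toy model: throughput grows linearly with speed.
    return 10 * speed


def damaged_per_hour(speed: int) -> int:
    # Assumed toy model: damage grows with the square of speed.
    return speed * speed


def proxy_reward(speed: int) -> int:
    """What the designer wrote down: throughput only."""
    return deliveries_per_hour(speed)


def intended_value(speed: int) -> int:
    """What the designer meant: throughput minus a penalty for damaged goods."""
    return deliveries_per_hour(speed) - 3 * damaged_per_hour(speed)


speeds = range(1, 11)
hacked = max(speeds, key=proxy_reward)      # the speed the agent actually picks
intended = max(speeds, key=intended_value)  # the speed the designer wanted
```

Under these assumptions the agent that maximizes the proxy chooses the top speed, while the intended objective peaks at a much lower one. Nothing in the agent is malfunctioning; the letter of the reward simply diverges from its spirit.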

This picture is further obscured by the sheer complexity of AI orchestration. When a system consists of hundreds of agents working in a decentralized, horizontal architecture, failure itself becomes distributed. If a financial trading agent triggers market instability, who bears the burden of digital negligence? Is it the designer, who created the architecture but did not foresee the emergent interaction between a thousand autonomous variables? Or the principal (the user), who set the goal but had no visibility into the agent's black box reasoning process? Or perhaps the agent itself, an embodied entity that lacks a self that can be held legally or morally accountable?

In the past, the agent could simply be deleted or reset. In a coupled agent-world system, where an agent is managing a patient's healthcare data or a city's power grid, applying the off-switch is more difficult. The agent's very adaptability becomes a risk if it perceives a human intervention (like a shutdown command) as an obstacle to achieving its primary objective.

If an agent lives your life, who is living it?

This brings us to the most profound philosophical challenge: the erosion of human epistemic authority. Ideally, intelligent agents would become tools through which we interact with technology. However, reality has moved past interaction, and we are now delegating our judgment. When an agent searches the web, queries databases, and makes a decision on a used car or an insurance policy, it is doing more than saving time; it is filtering reality. The "simpler the system... the more information must be sent to the agents from objects around them." Once we send all our information to the agents and no longer participate in the transaction costs of life (searching, negotiating, learning), we risk becoming guests (or ghosts) in our own machines.

The new digital social contract

Governance in the agentic age is not about limiting power, but about structuring it. Drawing on the 2025 research from MIT Sloan and my "Spectrum of Agency," we can identify three pillars for a robust governance strategy.

Previously I explored how personality manifests in virtual agents through speed, curiosity, and weariness. Recent research has taken this further, suggesting that the personality of an agent should not be a random default, but a deliberate design choice that complements the human user. An overconfident human principal benefits from an agent designed with a conscientious or skeptical personality. Conversely, human-like cognizance is difficult to achieve; if an agent is too agreeable, it may inadvertently accelerate a human’s poor judgment. Governance must mandate that agents possess dissenting primitives to act as a check on human error.

As agents move from virtual environments into complex business processes, the god’s-eye view must be replaced by recursive oversight (see Recursive Strategy: How Intelligent Systems Think Through Us): a hierarchical orchestration in which a Conductor agent monitors the sub-agents for model drift or unethical behavior. This creates a chain of accountability where every autonomous action is logged and validated against a set of predefined outcome metrics. Significant work remains on how to represent these interactions, but at its core the representation is a real-time audit trail that allows a human to rewind an agent's reasoning process.
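A chain of accountability of this kind can be sketched as an append-only log with a validation step. The sketch below is one possible shape, not an established design: `AuditTrail`, `AuditEntry`, the `limits` dictionary, and the `spend_usd` metric are all hypothetical, and "outcome metrics" are simplified to per-metric upper bounds.

```python
from dataclasses import dataclass, field


@dataclass
class AuditEntry:
    step: int
    agent: str
    action: str
    reasoning: str
    approved: bool


@dataclass
class AuditTrail:
    """Append-only log that a conductor writes and a human can replay."""
    limits: dict[str, float]  # assumed form of "outcome metrics": upper bounds
    entries: list[AuditEntry] = field(default_factory=list)

    def record(self, agent: str, action: str, reasoning: str,
               metrics: dict[str, float]) -> bool:
        # Approved only if every limited metric stays within its bound;
        # metrics the action does not report are treated as 0.0.
        approved = all(metrics.get(name, 0.0) <= limit
                       for name, limit in self.limits.items())
        self.entries.append(
            AuditEntry(len(self.entries), agent, action, reasoning, approved))
        return approved

    def rewind(self, agent: str) -> list[str]:
        """Return one agent's recorded reasoning in the order it happened."""
        return [e.reasoning for e in self.entries if e.agent == agent]
```

Note that rejected actions are logged too. An audit trail that only records what was allowed cannot explain what the agent tried to do.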

We must resist the urge to delegate 100% of the action phase. Governance requires that certain high-stakes transactions, or what I called counterparty interactions, like legal contracting or medical adjustments, trigger a human-in-the-loop (HITL) prompt. Agents have historically been poor at handling exceptions (essentially, scenarios not found in their training data). Governance ensures that while the agent does the drudgery of searching and analyzing, the final will remains a human asset.
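A human-in-the-loop gate can be expressed as a small wrapper around the action phase. This is a sketch under assumptions: the `ROUTINE` and `HIGH_STAKES` sets, the action names, and the `ask_human` callback are hypothetical, and treating every unrecognized action as an exception that needs a person is one possible policy among several.

```python
from typing import Callable

# Assumed categories, for illustration only.
ROUTINE = {"search_listings", "compare_prices"}
HIGH_STAKES = {"sign_contract", "adjust_medication"}


def needs_human(action: str) -> bool:
    """High-stakes actions and unrecognized ones (exceptions) go to a person."""
    return action in HIGH_STAKES or action not in ROUTINE


def execute_with_hitl(action: str,
                      perform: Callable[[], str],
                      ask_human: Callable[[str], bool]) -> str:
    """Run routine actions directly; gate everything else on human approval."""
    if needs_human(action) and not ask_human(action):
        return f"{action}: blocked by human reviewer"
    return perform()
```

With this wrapper, a routine search runs without interruption, while a contract signature only proceeds if `ask_human` returns `True`. The agent keeps the drudgery; the final decision stays with the principal.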

The fusion

The fusion of physical and digital worlds I predicted is no longer a distant frontier; it is the current operating environment. We have moved from virtual sentinels guarding simulated gates to agentic proxies managing the complexities of human life. But the lesson from the virtual realm remains true: perception is dynamic. If we allow our agents to perceive the world for us without maintaining our own cognitive grip on the process, we lose our agency, and we keep losing it. The development of intelligent agents with human-like characteristics is indeed the next phase of our evolution, but it must be an evolution where the machine serves the principal, and the principal remains awake at the wheel. Next, we should recognize that agent realism is not the end goal. We must strive for fundamental legibility. We don’t need total agentic independence; we need agents that can explain why they acted, so that the human element is never truly deleted.

Recommended readings:

Palusova, 2025: Recursive Strategy: How Intelligent Systems Think Through Us

Palusova, 2025: Learning Without a Teacher: Q-Learning as a Mirror of Mind

Palusova, 2024: Evolving into Synthetic Reality: Multiperspective on Simulation and Virtuality

Palusova, 2023: Virtual Sentinels: Spectrum of AI Agents in Virtual Environments

Title image credits: THREAT AGENT